Mercury 2: The LLM That Doesn't Generate Like an LLM

TL;DR

Inception Labs shipped the first reasoning model built on diffusion instead of autoregressive generation. Over 1,000 tokens per second, competitive benchmarks, and a fundamentally different approach to how AI generates text.


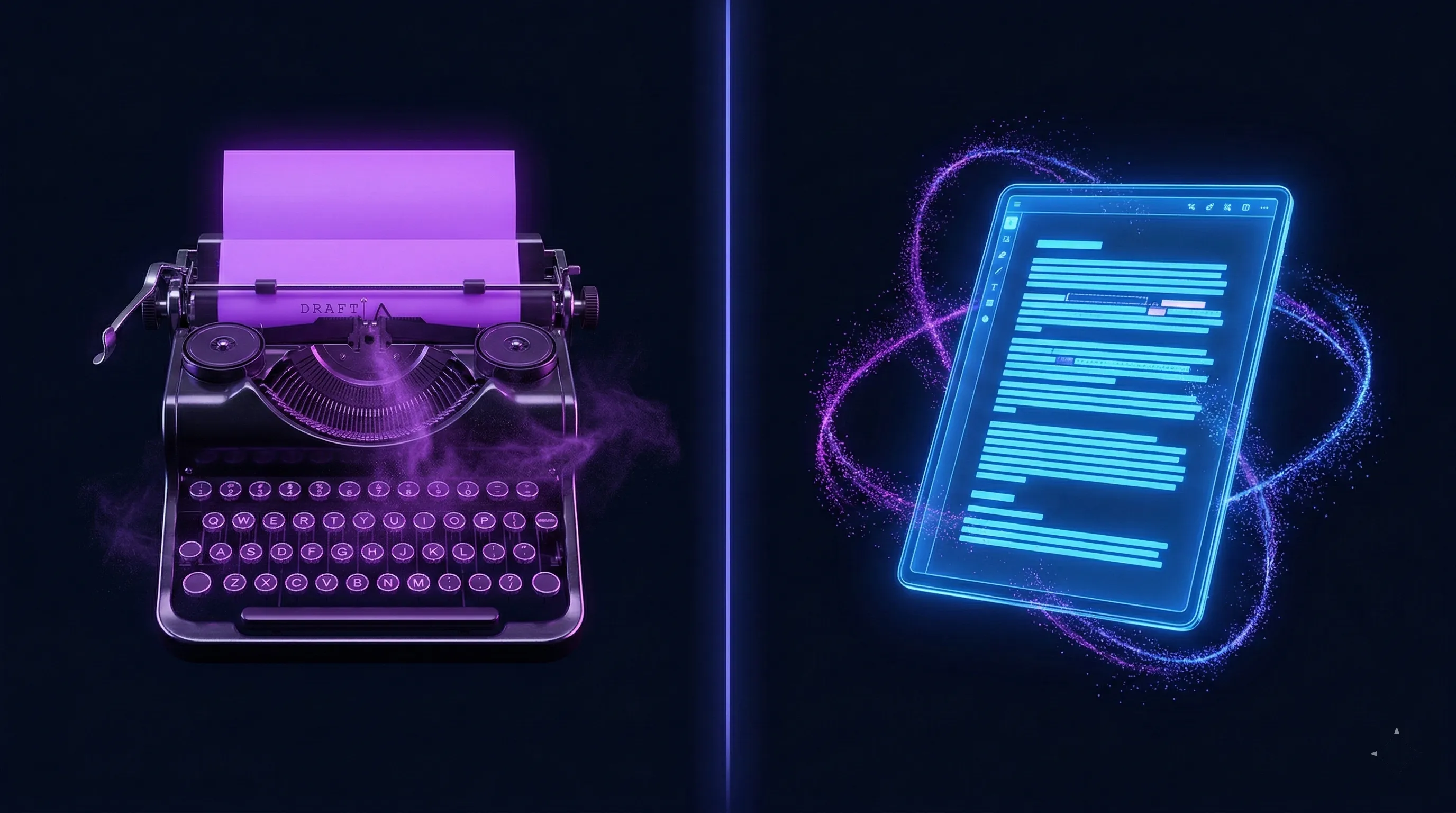

Every LLM you use today is a typewriter. One token at a time, left to right, each keystroke permanent. If the reasoning drifts early, tough luck. It can only move forward.

Mercury 2 is an editor. It starts with a rough draft and sharpens the whole thing with each pass. And it does this at over 1,000 tokens per second.

Inception Labs just shipped the first reasoning model built on diffusion instead of autoregressive generation. The same fundamental approach that already won in image and video generation, now applied to language. And the results are real.

The Speed Problem Nobody Actually Solved

Remember when Groq hit the scene? Raw inference speed got everyone excited. But the models that could run that fast were limited. They couldn't do tool calling well. They struggled with complex reasoning. Lower benchmark scores across the board. Speed at a real cost.


The entire industry has been racing to solve this since. OpenAI, NVIDIA, Fireworks, Baseten. Billions spent on better hardware, better kernels, quantization, distillation. Real gains, but all incremental. Everyone squeezing more out of the same autoregressive paradigm.

Mercury 2 took a different path. The speed comes from the model itself, not infrastructure optimization.

How Diffusion LLMs Actually Work

Autoregressive generation: token one locks before token two begins. Sequential. Permanent. If you make a mistake early, it cascades through everything that follows.

Diffusion generation: start with noise, iteratively refine the entire output in parallel. Multiple tokens per forward pass. Built-in error correction because the model revisits and refines as it goes.

This is actually closer to how humans think. You don't reason word by word. You hold the whole idea, draft, revise, reconsider, then commit. CMU researchers found in September 2025 that diffusion models are "significantly more robust to data repetition" than autoregressive models, especially in data-constrained settings. The academic community is taking this architecture seriously: the LLaDA paper introduced diffusion as a viable alternative to autoregressive text generation and has been gaining traction.
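To make the difference in forward passes concrete, here is a toy sketch of the two decoding styles. It is not Mercury's algorithm: the "model" simply copies from a known target sentence, the confidence rule (longer words first) is made up for illustration, and the function names are hypothetical. It also leaves out the revision step, since a real diffusion model can change tokens it has already filled in. The only thing it shows is how committing several positions per pass reduces the number of passes.

```python
MASK = None  # sentinel for a position that has not been filled in yet


def autoregressive_decode(target):
    """One token per forward pass, left to right; earlier tokens are never revisited."""
    out, passes = [], 0
    for token in target:  # stand-in for "run the model, take the next token"
        out.append(token)
        passes += 1
    return out, passes


def parallel_refine_decode(target, tokens_per_pass):
    """Start fully masked and fill several positions per forward pass."""
    if tokens_per_pass < 1:
        raise ValueError("tokens_per_pass must be at least 1")
    out = [MASK] * len(target)
    passes = 0
    while MASK in out:
        masked = [i for i, tok in enumerate(out) if tok is MASK]
        # Made-up confidence rule: commit the longest remaining words first.
        chosen = sorted(masked, key=lambda i: (-len(target[i]), i))[:tokens_per_pass]
        for i in chosen:
            out[i] = target[i]
        passes += 1
    return out, passes


sentence = "the quick brown fox jumps over the lazy dog".split()
ar_text, ar_passes = autoregressive_decode(sentence)
dl_text, dl_passes = parallel_refine_decode(sentence, tokens_per_pass=4)
print(ar_passes, dl_passes)
```

Both functions end with the same nine tokens, but the second gets there in fewer passes because positions are filled out of order and in batches.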

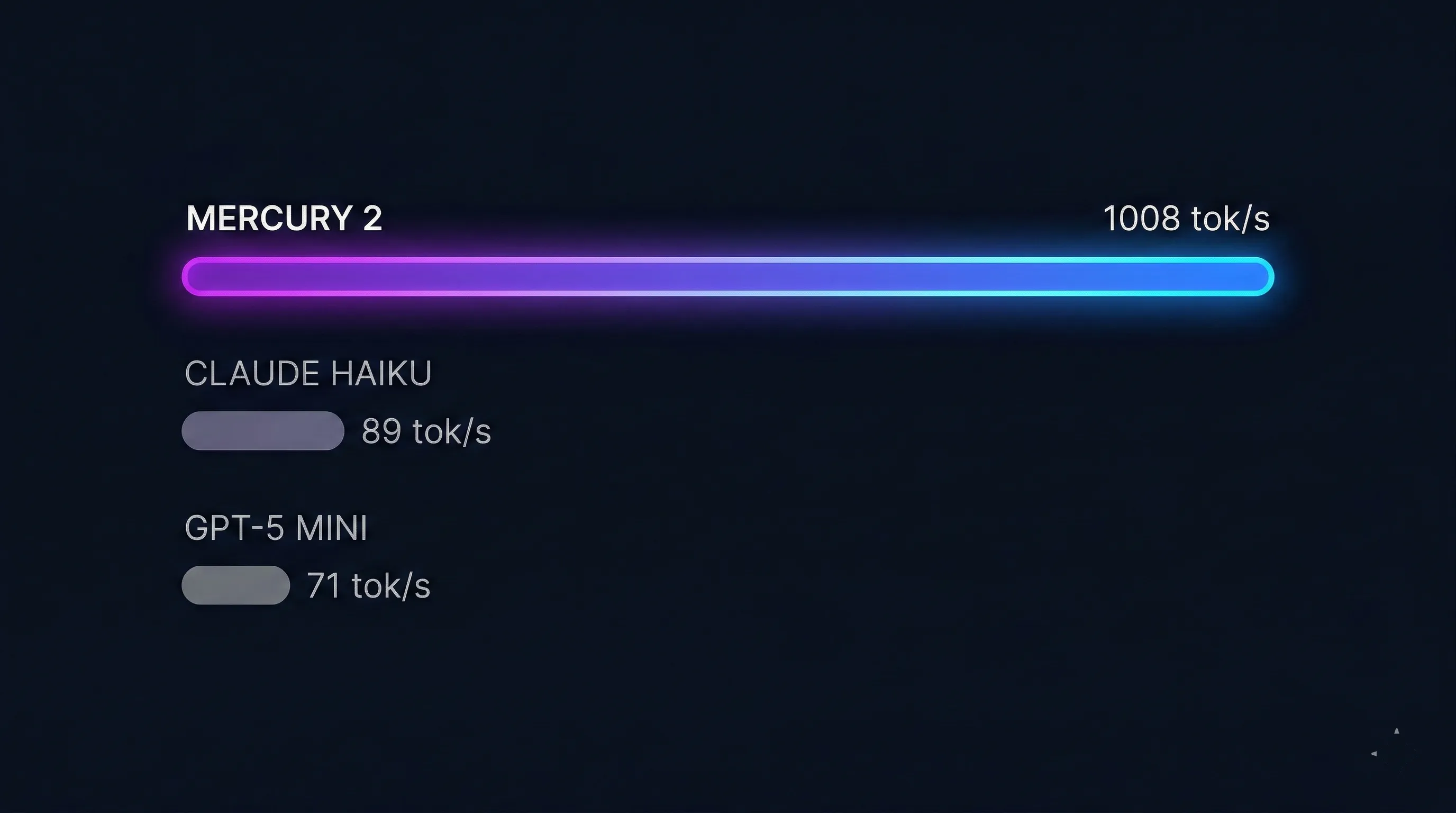

The throughput numbers tell the story:

| Model | Output Throughput |
|---|---|
| Mercury 2 | 1,008 tok/s |
| Claude Haiku 4.5 | ~89 tok/s |
| GPT-5 mini | ~71 tok/s |

That's over 10x the throughput: roughly 11x Claude Haiku 4.5 and 14x GPT-5 mini. On reasoning tasks specifically, Mercury 2 is 5x faster than speed-optimized autoregressive models.
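The ratios are easy to check from the throughput table. The sketch below uses only the table's figures; the 2,000-token response length is an arbitrary assumption, and the timing ignores time-to-first-token and network overhead.

```python
# Output throughput in tokens per second, copied from the table above.
THROUGHPUT_TOK_S = {
    "Mercury 2": 1008,
    "Claude Haiku 4.5": 89,
    "GPT-5 mini": 71,
}


def speedup(fast_model, slow_model):
    """Ratio of output throughput between two models in the table."""
    return THROUGHPUT_TOK_S[fast_model] / THROUGHPUT_TOK_S[slow_model]


def seconds_for(model, output_tokens):
    """Pure generation time for a response of the given length."""
    return output_tokens / THROUGHPUT_TOK_S[model]


for name in THROUGHPUT_TOK_S:
    print(f"{name}: {seconds_for(name, 2000):.1f} s for 2,000 output tokens")
```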


Quality Didn't Get Sacrificed

Speed without quality is just fast garbage. Mercury 2 holds up:

| Benchmark | Mercury 2 | GPT-5 mini |
|---|---|---|
| AIME 2025 | 91.1 | 91.1 |
| GPQA | 73.6 | Competitive |
| LiveCodeBench | 67.3 | Competitive |
| IFBench | 71.3 | -- |
| SciCode | 38.4 | -- |

Important context: these comparisons are against speed-optimized models, not frontier models. Mercury 2 plays in the speed + reasoning lane. It's not trying to beat Opus on raw intelligence. It's trying to give you reasoning-grade quality at speeds that unlock entirely new application patterns.

Worth noting: Mercury v1 (early 2025) had real limitations. ACI.dev's beta review flagged hallucination issues and a 16K context ceiling. Mercury 2 is a significant leap: 128K context, native tool use, and tunable reasoning. The gap between v1 and v2 is large enough that early criticism doesn't map cleanly to the current model.

Where 1,000 tok/s Actually Matters

Three use cases where this speed changes what you can build:

Agent Loops

Latency compounds across multi-step workflows. Every tool call, every reasoning step adds wait time. In a demo app built for the video, Mercury 2 ran search, scrape, and summarize before most models would finish their first response. Code agents, browser automation, IT triage: more steps, tighter feedback cycles. Skyvern is already using it in production and reports Mercury 2 is "at least twice as fast as GPT-5.2."
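A back-of-the-envelope model shows how that compounding plays out. Everything except the throughput figures is an assumption: the step count, the tokens generated per step, and the half-second tool latency are illustrative, and `loop_seconds` is a hypothetical helper that ignores time-to-first-token and input processing.

```python
def loop_seconds(steps, output_tokens_per_step, tokens_per_second,
                 tool_seconds_per_step=0.5):
    """Rough wall-clock time for an agent loop: generation plus tool time, per step."""
    generation_seconds = output_tokens_per_step / tokens_per_second
    return steps * (generation_seconds + tool_seconds_per_step)


# Hypothetical 10-step loop that generates 400 tokens at every step.
fast = loop_seconds(steps=10, output_tokens_per_step=400, tokens_per_second=1008)
slow = loop_seconds(steps=10, output_tokens_per_step=400, tokens_per_second=89)
print(f"{fast:.1f} s at 1,008 tok/s vs {slow:.1f} s at 89 tok/s")
```

Under these assumptions the loop takes about 9 seconds at Mercury 2's throughput and about 50 seconds at 89 tok/s. Tool time is the same in both runs, so the gap comes entirely from generation.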

Voice and Real-Time

p95 latency determines whether a voice interface feels natural or robotic. That applies to support agents, voice bots, and real-time translation. When you need reasoning inside tight SLAs, speed isn't a nice-to-have. Companies like Wispr Flow (real-time transcript cleanup), OpenCall (voice agents), and Happyverse AI (real-time voice/video avatars) are already shipping with Mercury under the hood.
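For readers who haven't worked with tail latency, here is a minimal sketch of why p95 matters more than the average. The latency samples are invented, and `percentile_nearest_rank` is a hypothetical helper using the nearest-rank definition (one of several common percentile definitions).

```python
def percentile_nearest_rank(samples_ms, percentile):
    """Nearest-rank percentile: the smallest sample with at least `percentile`% at or below it."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    if not 0 < percentile <= 100:
        raise ValueError("percentile must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = (percentile * len(ordered) + 99) // 100  # integer ceiling of p * n / 100
    return ordered[rank - 1]


# Invented response times for 20 voice turns: most are quick, two stall.
latencies_ms = [300] * 18 + [2000] * 2
mean_ms = sum(latencies_ms) / len(latencies_ms)
p95_ms = percentile_nearest_rank(latencies_ms, 95)
print(mean_ms, p95_ms)
```

The mean here is 470 ms, which looks fine, while the p95 is 2,000 ms. The slow turns are the ones a caller notices.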

Coding Workflows

Coding with a model is a prompt-review-tweak loop, iterated in rapid succession. The faster the model responds, the more you stay in flow. Zed, the code editor, integrated Mercury and said that "suggestions land fast enough to feel like part of your own thinking." JetBrains published research arguing diffusion models "better reflect how developers think" because they edit and refine rather than writing left-to-right.

Drop-In Compatible

Mercury 2 is OpenAI API compatible. Swap the base URL, model string, and API key. Works with any framework that supports OpenAI's format.
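As a rough sketch of what "swap the base URL, model string, and API key" means at the HTTP level, the snippet below builds an OpenAI-style chat completions request using only the standard library. The base URL and the `mercury-2` model id are assumptions, not values confirmed by this article, so check both against Inception's documentation. `build_chat_request` and `send` are hypothetical helper names.

```python
import json
import urllib.request

# Assumed values: confirm both against Inception's API documentation.
BASE_URL = "https://api.inceptionlabs.ai/v1"
MODEL = "mercury-2"


def build_chat_request(api_key, prompt, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's text (needs a real key and network)."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)["choices"][0]["message"]["content"]
```

With the official `openai` Python SDK, the equivalent change is passing `base_url` and `api_key` to the client constructor and using the new model string in the call.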

- 128K context window

- Tool use, structured outputs, RAG

- Reasoning effort dial: instant, low, medium, high

- $0.25/M input tokens, $0.75/M output tokens

That pricing makes it one of the most cost-competitive reasoning models available. For high-volume agent workloads where you're making hundreds of calls per session, the economics are compelling.
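To put numbers on that, here is a small cost estimate using the listed prices. The session shape (300 calls, 4,000 input tokens and 500 output tokens per call) is a made-up example, and `session_cost_usd` is a hypothetical helper.

```python
# Prices from the list above, in US dollars per million tokens.
INPUT_USD_PER_M = 0.25
OUTPUT_USD_PER_M = 0.75


def session_cost_usd(calls, input_tokens_per_call, output_tokens_per_call):
    """Estimated cost of one agent session at the listed per-token prices."""
    input_tokens = calls * input_tokens_per_call
    output_tokens = calls * output_tokens_per_call
    return (input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M) / 1_000_000


# Hypothetical session: 300 calls, each with 4,000 input and 500 output tokens.
print(f"${session_cost_usd(300, 4000, 500):.4f}")
```

That hypothetical session comes to about $0.41: $0.30 for 1.2M input tokens and roughly $0.11 for 150K output tokens.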

Who Built This

Inception Labs isn't a random startup. CEO Stefano Ermon is a Stanford CS associate professor who co-authored DDIM (the denoising method powering Stable Diffusion and Midjourney). His co-founders Aditya Grover (UCLA) and Volodymyr Kuleshov (Cornell) are both former students. The team includes veterans from DeepMind, Meta, OpenAI, Microsoft, and HashiCorp.

Backed by $50M from Menlo Ventures, M12 (Microsoft), NVentures (NVIDIA), Snowflake Ventures, and Databricks. Individual investors include Andrew Ng and Andrej Karpathy. Fortune 100 companies (unnamed) are already running Mercury in production. Available on Azure AI Foundry.

The people who proved diffusion works for pixels are now proving it works for tokens.

The Bigger Question

Whether diffusion becomes the future of how all LLMs work is an open question. But the trajectory is clear. Autoregressive generation has a fundamental speed ceiling that no amount of hardware can fully overcome. Diffusion solves that at the model level.

Mercury 2 is the proof point. Fast enough to change what you can build. Cheap enough to actually use at scale. And backed by the people who literally wrote the math.

Try it yourself:

- API Platform - start building

- Playground - test it live

This article is based on a Developers Digest video sponsored by Inception Labs. All technical claims are sourced from third-party benchmarks and direct testing.

Further Reading:

- Inception Labs: Introducing Mercury 2 - official announcement

- CMU: Diffusion Beats Autoregressive in Data-Constrained Settings - academic backing

- JetBrains: Why Diffusion Models Could Change Developer Workflows - developer perspective

- LLaDA: Large Language Diffusion with mAsking (arxiv) - the foundational paper

- ACI.dev: Thoughts on Mercury API - honest early critique of v1


