Progressive Disclosure: How Claude Code Cut Token Usage by 98%

TL;DR

Cloudflare, Anthropic, and Cursor independently discovered the same pattern: don't load every tool definition upfront; let agents discover what they need. The results are dramatic.

In September 2025, Cloudflare published a blog post titled "Code Mode: The Better Way to Use MCP." It contained a single, devastating observation: we've been using MCP wrong.

The problem wasn't theoretical. When you load MCP tool definitions directly into an LLM's context window, you're forcing the model to see every available tool for every request, whether it needs them or not. Most of the time, those tools sit idle, burning tokens for nothing.

Cloudflare's insight was radical: models are excellent at writing code, but not great at calling MCP tools directly. So why not let the model write TypeScript that finds and calls the tools it needs, instead of embedding every tool schema upfront?

In the months that followed, Anthropic and Cursor independently arrived at the same conclusion. The pattern has a name: progressive disclosure.

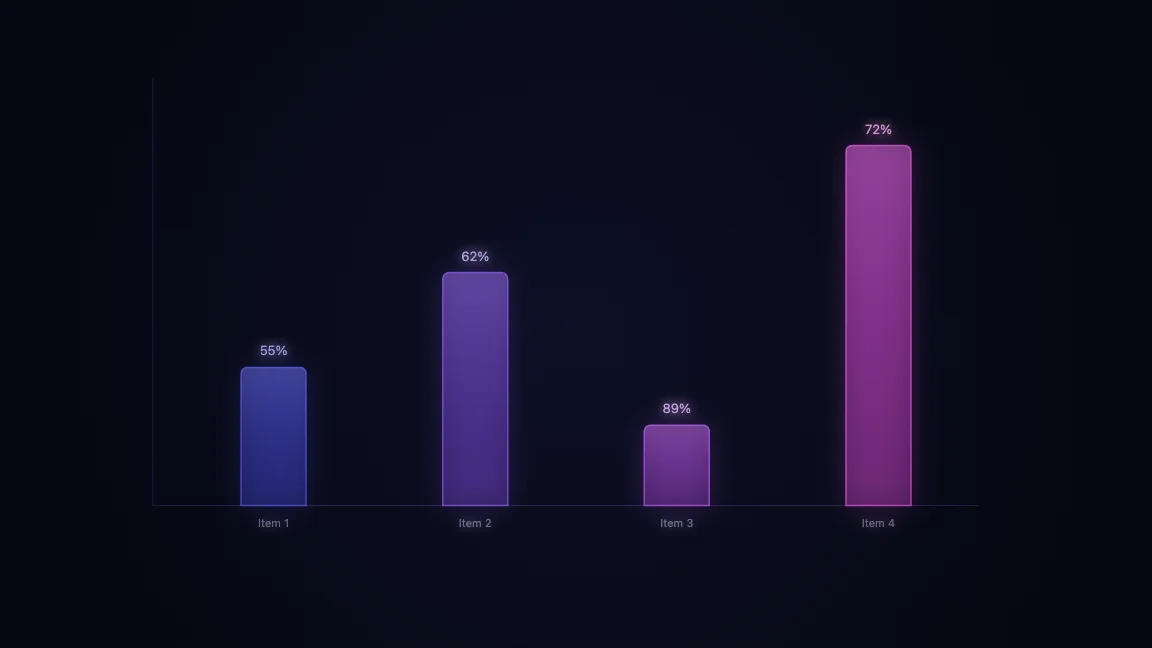

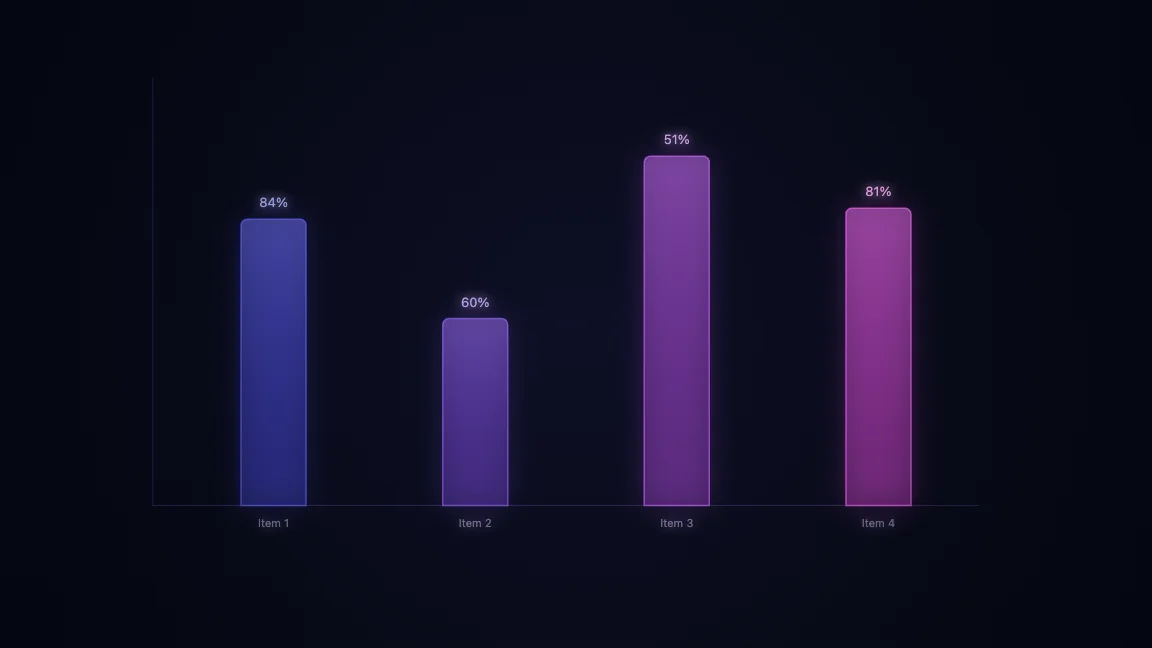

## The Numbers Don't Lie


Anthropic's tool search feature shows the math clearly. Loading a full MCP tool library the traditional way consumed about 77,000 tokens of context. With tool search, where tools are discovered on demand, that dropped to about 8,700 tokens, while the entire tool library stayed available. Anthropic describes this as an 85% reduction in token usage; measured on upfront context alone, 8,700 out of 77,000 is a cut of nearly 89%.
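The percentage is simple arithmetic on the two token counts. This small Python helper uses only the figures quoted in this section:

```python
def reduction_pct(before: int, after: int) -> float:
    """Percentage drop from `before` tokens to `after` tokens."""
    return (before - after) / before * 100


# Token counts quoted above for Anthropic's tool search comparison.
BEFORE, AFTER = 77_000, 8_700

saved = BEFORE - AFTER
pct = reduction_pct(BEFORE, AFTER)
print(f"{saved:,} tokens saved ({pct:.1f}% less upfront context)")
# prints: 68,300 tokens saved (88.7% less upfront context)
```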

Accuracy improved too. In Anthropic's MCP evaluations with tool search enabled:

- Opus 4: 49% → 74%

- Opus 4.5: 79.5% → 88.1%

Cursor reported similar wins: by implementing dynamic context discovery, it cut total agent tokens by 46.9%. The biggest number comes from the code-execution approach Cloudflare championed: a 98.7% reduction in token usage when agents call tools by writing code in a TypeScript sandbox instead of reading MCP schemas.

This isn't incremental optimization. This is a paradigm shift.

## The Shift from GPUs to Sandboxes

Six months ago, the industry obsessed over inference speed and GPU efficiency. The conversation has moved on. Cloudflare, Anthropic, Vercel, Cursor, Daytona, and Lovable are all converging on the same infrastructure: sandboxes, file systems, and bash.

The pattern is elegant. Instead of loading every tool definition into the context window, you give agents three things:

- A file system (read, write, search)

- Bash (execute commands, run scripts)

- Code execution (call MCP servers on demand)

The agent's job becomes simple: discover what it needs, load it, use it. No context bloat. No unused tool schemas. No wasted tokens.
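A minimal Python sketch of the three primitives and the discover, load, use loop. The function names are hypothetical (they are not Claude Code's built-in tools), and `run_command` executes directly on the host; a real agent runtime would run it inside a sandbox.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def search_files(root: str, needle: str) -> list[str]:
    """File-system primitive: paths of files under `root` that contain `needle`."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and needle in path.read_text(errors="ignore"):
            hits.append(str(path))
    return hits


def read_file(path: str) -> str:
    """File-system primitive: load one file, only after the agent has chosen it."""
    return Path(path).read_text()


def run_command(args: list[str], timeout: float = 30.0) -> str:
    """Bash/code-execution primitive: run a command and return its stdout."""
    done = subprocess.run(args, capture_output=True, text=True, timeout=timeout, check=True)
    return done.stdout


# Discover -> load -> use, against a throwaway directory.
with tempfile.TemporaryDirectory() as root:
    Path(root, "deploy.md").write_text("To deploy, run the release script.\n")
    Path(root, "style.md").write_text("Use four-space indentation.\n")
    found = search_files(root, "deploy")        # discover
    instructions = read_file(found[0]).strip()  # load only the match
computed = run_command([sys.executable, "-c", "print(6 * 7)"]).strip()  # use

print(len(found), instructions, computed)
```

Nothing about `style.md` ever reaches the agent: it is skipped at the search step, which is the whole point of discovering before loading.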

## How to Build This in Claude Code

Claude Code implements progressive disclosure through skills. A skill is a folder containing a `SKILL.md` file: YAML frontmatter at the top (the summary) followed by Markdown instructions that point to supporting scripts and reference files (the implementation).

Here's the pattern:

```markdown
---
name: web-research
description: "Search and summarize web content using Firecrawl"
---

## Usage

Call this skill when you need current web information.

## Implementation

- [firecrawl.sh](firecrawl.sh) - Core search and scraping
- [research-template.md](research-template.md) - Output format
```

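The agent sees only the frontmatter in context (10-30 tokens for a description this short). It reads the full instructions and referenced files only when it invokes the skill. Scale to hundreds or thousands of skills and the static context cost grows by just one short summary per skill, while the bulk of every skill stays on disk until it's needed.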

You can nest skills hierarchically. A skill can reference sub-skills. An agent can walk the directory structure, find what it needs, and load only that.
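The sketch below imitates this loading pattern in Python. It is not how Claude Code loads skills internally: the helper names are hypothetical and the frontmatter parser handles only flat `key: value` pairs (a real loader would use a YAML library). It shows the split between what is always in context (one line per skill, including skills in nested folders) and what is read only on invocation.

```python
import tempfile
from pathlib import Path


def parse_frontmatter(text: str) -> dict[str, str]:
    """Simplified parser: `key: value` lines between the first two `---` lines."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip().strip('"')
    return meta


def build_index(skills_root: str) -> dict[str, tuple[str, Path]]:
    """name -> (description, path) for every SKILL.md, nested folders included.
    Only frontmatter fields are kept; skill bodies are not stored."""
    index: dict[str, tuple[str, Path]] = {}
    for skill_md in sorted(Path(skills_root).rglob("SKILL.md")):
        meta = parse_frontmatter(skill_md.read_text())
        if "name" in meta:
            index[meta["name"]] = (meta.get("description", ""), skill_md)
    return index


def index_prompt(index: dict[str, tuple[str, Path]]) -> str:
    """The only skill text that sits in context on every request."""
    return "\n".join(f"- {name}: {desc}" for name, (desc, _) in index.items())


def load_skill(index: dict[str, tuple[str, Path]], name: str) -> str:
    """Full instructions, read from disk only when the skill is invoked."""
    return index[name][1].read_text()


with tempfile.TemporaryDirectory() as root:
    web = Path(root, "web-research")
    web.mkdir()
    (web / "SKILL.md").write_text(
        "---\nname: web-research\n"
        'description: "Search and summarize web content using Firecrawl"\n---\n\n'
        "## Usage\n\nCall this skill when you need current web information.\n"
    )
    nested = Path(root, "data", "csv-cleanup")  # a skill one level deeper
    nested.mkdir(parents=True)
    (nested / "SKILL.md").write_text(
        "---\nname: csv-cleanup\ndescription: Normalize CSV headers\n---\nSteps...\n"
    )

    index = build_index(root)
    always_in_context = index_prompt(index)
    on_invoke = load_skill(index, "web-research")

print(always_in_context)
```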

## Advanced Tool Use: Memory and Code Execution

Anthropic's recent tool-use releases include two other pieces that complete the picture:

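**Programmatic Tool Calling:** Tool results no longer have to flow through the model one call at a time. Claude writes code that calls the tools inside a code execution environment, so that code can inspect outputs, transform them, and chain operations, and only the final result comes back into context.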
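A Python sketch of the difference, using a hypothetical `get_expenses` tool and made-up records. The "model-written" function is hard-coded here; in Anthropic's feature Claude writes that code and it runs in the code execution environment, which this sketch does not reproduce. What matters is what ends up in context in each case.

```python
import json


# Hypothetical tool that returns bulky raw records.
def get_expenses(employee: str) -> list[dict]:
    records = {
        "ana": [{"amount": 120.0}, {"amount": 80.5}, {"amount": 410.0}],
        "raj": [{"amount": 60.0}, {"amount": 45.0}],
    }
    return records.get(employee, [])


def direct_tool_calls(employees: list[str]) -> str:
    """Classic tool use: every raw result is serialized back into context."""
    return "\n".join(json.dumps(get_expenses(e)) for e in employees)


def programmatic_tool_calls(employees: list[str], limit: float) -> str:
    """Code the model might write: chain calls, aggregate, return only the answer."""
    over = []
    for e in employees:
        total = sum(item["amount"] for item in get_expenses(e))
        if total > limit:
            over.append(f"{e}: {total:.2f}")
    return "; ".join(over) or "nobody over limit"


team = ["ana", "raj"]
into_context_direct = direct_tool_calls(team)
into_context_programmatic = programmatic_tool_calls(team, limit=500.0)
print(into_context_programmatic)  # ana: 610.50
print(len(into_context_direct), "vs", len(into_context_programmatic), "characters")
```

With two employees the gap is small; with hundreds of records per call, the direct version pushes all of them through the context window while the programmatic version still returns one short line.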

**Memory Tool:** Not embeddings. Not vector databases. Just files. Markdown documents stored in the file system, read and updated as needed. Simple. Searchable. Manageable.

The principle extends to Claude Code. Instead of complex vector retrieval, read sections of files on demand. Update a `memory.md` when something matters. Let the agent search with grep and find. It works.
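A minimal sketch of that file-based memory, assuming a plain `memory.md` in the working directory. The helper names are hypothetical; this is not the interface of Anthropic's memory tool, which defines its own commands.

```python
import tempfile
from pathlib import Path


def remember(memory_file: Path, note: str) -> None:
    """Append one fact to the memory file as a Markdown bullet."""
    with memory_file.open("a", encoding="utf-8") as f:
        f.write(f"- {note}\n")


def recall(memory_file: Path, keyword: str) -> list[str]:
    """grep-style lookup: only lines mentioning `keyword` (case-insensitive)."""
    if not memory_file.exists():
        return []
    lines = memory_file.read_text(encoding="utf-8").splitlines()
    return [line for line in lines if keyword.lower() in line.lower()]


def read_section(path: Path, start: int, count: int) -> str:
    """Return `count` lines starting at 1-indexed line `start`, not the whole file."""
    lines = path.read_text(encoding="utf-8").splitlines()
    return "\n".join(lines[start - 1 : start - 1 + count])


with tempfile.TemporaryDirectory() as workdir:
    memory = Path(workdir, "memory.md")
    remember(memory, "Use pnpm, not npm, in this repo")
    remember(memory, "API tests need DATABASE_URL set")
    hits = recall(memory, "PNPM")
    second_line = read_section(memory, start=2, count=1)

print(hits)
print(second_line)
```

The design choice is deliberate plainness: the memory is a human-readable file, so the agent (or you) can inspect, edit, or diff it like any other file in the repo.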

## What This Enables

Before progressive disclosure, agent tasks had to be small and contained. You watched token limits. You minimized tool use. You feared the context reset.

Now:

- **Multi-hour workflows** without context resets

- **Hundreds or thousands of tool integrations** available on demand

- **Complex orchestration with little orchestration logic** - when the agent can look up tools and skills itself, it handles the complexity instead of hard-coded routing

- **Autonomous systems** that run for extended periods

- **Context is no longer the bottleneck**

## The Experimental MCP CLI Flag

Cloudflare's and Anthropic's work inspired an experimental feature in Claude Code: the MCP CLI flag. When it's enabled, the model uses tool search to discover and invoke MCP servers on demand instead of carrying every MCP schema in context.

Is it perfect? Not yet. It's actively being refined. But the direction is clear: near-zero upfront context cost for tool discovery, and tens of thousands of tokens saved per request when the tool library is large.
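Here is a Python sketch of the general tool-search idea behind this feature. The registry, tool names, and schemas are hypothetical, and the naive keyword scoring is a stand-in: Anthropic's tool search and Claude Code's flag use their own matching, which this does not reproduce. The comparison is between serializing every schema into each request and serializing only the search hits.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Tool:
    name: str
    description: str
    input_schema: dict = field(default_factory=dict)


# Hypothetical registry; a real one could hold hundreds of MCP tool definitions.
REGISTRY = [
    Tool("github_create_issue", "Create an issue in a GitHub repository",
         {"type": "object", "properties": {"repo": {"type": "string"}, "title": {"type": "string"}}}),
    Tool("slack_post_message", "Post a message to a Slack channel",
         {"type": "object", "properties": {"channel": {"type": "string"}, "text": {"type": "string"}}}),
    Tool("jira_search", "Search Jira issues with JQL",
         {"type": "object", "properties": {"jql": {"type": "string"}}}),
]


def eager_context(registry: list[Tool]) -> str:
    """Traditional loading: every schema is serialized into every request."""
    return json.dumps([asdict(t) for t in registry])


def tool_search(registry: list[Tool], query: str, limit: int = 3) -> list[Tool]:
    """Naive keyword scoring over tool names and descriptions."""
    words = query.lower().split()
    scored = []
    for tool in registry:
        haystack = f"{tool.name} {tool.description}".lower()
        score = sum(word in haystack for word in words)
        if score:
            scored.append((score, tool))
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable for ties
    return [tool for _, tool in scored[:limit]]


def on_demand_context(registry: list[Tool], query: str) -> str:
    """Only the schemas that matched the search enter the context."""
    return json.dumps([asdict(t) for t in tool_search(registry, query)])


matches = [t.name for t in tool_search(REGISTRY, "post slack message")]
print(matches)  # ['slack_post_message']
print(len(eager_context(REGISTRY)), "vs", len(on_demand_context(REGISTRY, "post slack message")))
```

With three tools the saving is modest; the eager cost grows with every tool you register, while the on-demand cost tracks only what each request actually matches.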

## The Convergence

What's remarkable is that Cloudflare, Anthropic, Cursor, and others arrived here independently. No coordination. Same conclusion: **tools as files, loaded on demand; bash is all you need.**

This wasn't what anyone predicted six months ago. It's counterintuitive. Most of us assumed you'd load everything up front. But the data is overwhelming.

The industry is converging on the same answer: progressive disclosure works.

## Build Boldly

If you've been cautious about Claude Code's scope because of context limits, stop. The bottleneck just moved. File systems, bash, and progressive disclosure unlock agents that can tackle ambitious, complex work without the orchestration overhead that held us back before.

Give the agent a file system. Get out of the way. Let it discover what it needs. The results speak for themselves.

---

## Further Reading

- **[Cloudflare Code Mode](https://blog.cloudflare.com/code-mode/)** - How TypeScript sandboxes beat MCP schema bloat

- **[Anthropic Advanced Tool Use](https://www.anthropic.com/engineering/advanced-tool-use)** - Tool search, programmatic tool calling, tool use examples

- **[Cursor's Dynamic Context Discovery](https://cursor.com/blog/dynamic-context-discovery)** - 46.9% token reduction in practice

- **[Claude Code Skills](https://code.claude.com/docs/en/skills)** - Implementation guide

## Watch the Video

<iframe width="100%" height="480" src="https://www.youtube.com/embed/DQHFow2NoQc" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen title="Progressive Disclosure in Claude Code"></iframe>

## Frequently Asked Questions

### What is progressive disclosure in Claude Code?

Progressive disclosure is a pattern where AI agents discover and load tools on demand rather than having all tool definitions embedded in the context window upfront. Instead of burning tokens on unused tool schemas, the agent uses a file system, bash, and code execution to find and invoke only the tools it needs for each specific task.

### How much does progressive disclosure reduce token usage?

The reductions are dramatic. Anthropic reported cutting upfront context from 77,000 tokens to 8,700 with tool search, which it describes as an 85% reduction in token usage. The code-execution approach, in which agents write code in a TypeScript sandbox instead of loading MCP schemas, has been reported to cut token usage by 98.7%. Cursor reported a 46.9% reduction in total agent tokens with dynamic context discovery.

### Why does progressive disclosure improve accuracy?

When models see fewer irrelevant tools in context, they make better decisions about which tools to use. Anthropic's evaluations showed accuracy improvements from 49% to 74% on Opus 4, and from 79.5% to 88.1% on Opus 4.5 after implementing tool search.

### How do I implement progressive disclosure in Claude Code?

Use skills: folders containing a `SKILL.md` file whose YAML frontmatter summarizes the skill and whose body references the implementation files. The agent sees only the frontmatter (10-30 tokens for a short description) in context. When the skill is invoked, it reads the full implementation. You can nest skills hierarchically and scale to thousands, adding only one short summary per skill to the static context.

### What three things do agents need for progressive disclosure?

Agents need: (1) a file system to read, write, and search, (2) bash to execute commands and run scripts, and (3) code execution to call MCP servers on demand. This lets the agent discover, load, and use tools dynamically instead of loading everything upfront.

### Does progressive disclosure work with MCP servers?

Yes. Instead of embedding all MCP schemas in context, you can use tool search to discover and invoke MCP servers on demand. Claude Code has an experimental MCP CLI flag that implements this pattern, saving tens of thousands of tokens per request while maintaining access to the full tool library.

### What does progressive disclosure enable that wasn't possible before?

It enables multi-hour workflows without context resets, hundreds or thousands of tool integrations available instantly, complex orchestration without orchestration logic, and truly autonomous systems that run for extended periods. Context is no longer the bottleneck for ambitious agent tasks.


Developers Digest

Technical content at the intersection of AI and development. Building with AI agents, Claude Code, and modern dev tools - then showing you exactly how it works.
